We follow an agile software development process that has been revisited, updated, and tweaked over the 13 years we've been in business. We are happy with the process we have now, but we're always trying to improve it. If you see inefficiencies or blind spots, please let us know in the comments below.

Planning and Project Management

Stories and Bug Fixes

The business, usually through a single product manager, is primarily responsible for writing up new stories. The content should be enough for the author to remember what they were talking about when asked for details in the future. Once the placeholder story comes up in the work cycle, the delivery team meets with the relevant stakeholders and asks them questions about the feature, ideally working through and writing up some concrete examples and coming up with a design if needed. These conversations and examples highlight the reason for the feature and serve as a powerful motivator for the delivery team.

There is no need to write stories in the form "As a ____, I want to ____, so I can ____." The blanks should have been filled in from the conversations and may or may not be useful to write into the story. It's up to the team whether to document that for posterity or for new people joining the team, but it's faster to tell people the reason this story matters to the business and transfer the knowledge that way.

There is no need to agree on a definition of a story being "ready" for the team to work on. If it's at the top of the backlog and needs design work or more details, it's your job to chase that down before coding starts. We don't start coding until we have acceptance criteria that are clear enough for the developer. The developer usually writes up the testing steps for the tester, product owner, and business.

Everyone on the team can write a story. Developers and testers find technical debt, bugs, and UI inconsistencies all the time. The team can prioritize this work, but it is important that it is visible to both the delivery team and the business.

There is no need to separate feature work from bug work. A bug is when the software does something the business doesn't want it to. A feature is when the software doesn't yet do something the business wants it to. There is no need for a blanket policy of "fix all bugs first". The business knows if there are low priority bugs that can wait while a high impact feature is being worked on.

We don't trace user stories to epics and don't usually break them down into tasks. If stories have a common theme, they can be tagged with a label or colored the same color if needed.

Estimating

We don't estimate story sizes. We can tell the business how many stories we average per release or per time period. Using this knowledge and seeing how far back the story is in the backlog and the number of stories in progress, the business can roughly calculate when that bug fix or feature will be in production.

Priorities

Stories are ranked in the backlog in priority order, with the highest priority stories at the top. If the story or bug fix is important, it can be moved up so it is more likely to be in the next release or the one after that.

The business can change the delivery team priorities at any time, and the attitude of the delivery team should be that we're glad to do it because we are there to work with the business to make the software as effective as possible.

Occasionally, there are very high priority bug fixes that need to be made. We can add a swim lane to our online Kanban board for these, and they get expedited treatment to get the fix into production as quickly as possible.

Workflow

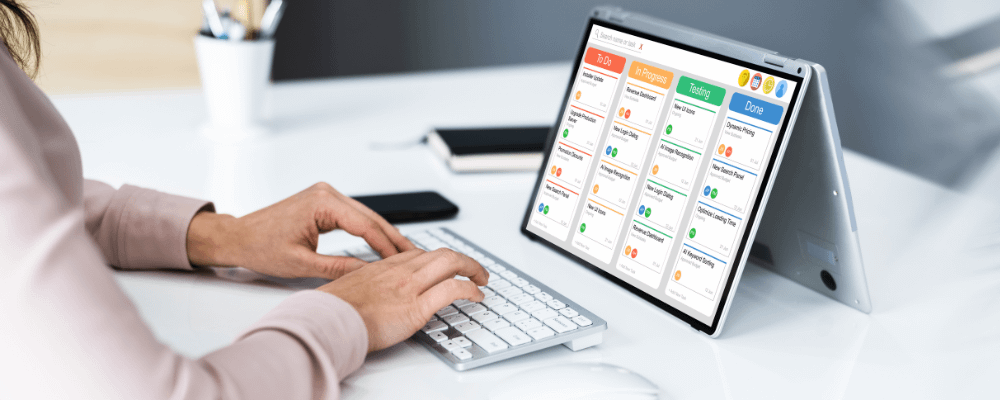

Stories are worked from left to right in the online Kanban board. The leftmost column is the Backlog. The rightmost column is stories pushed to production. The columns in between vary based on the project, but usually include Development, Testing, Ready for Acceptance Testing (a queue where stories sit until the next release to the user acceptance testing environment), and User-Acceptance Testing.

More than one person can be assigned a story. Anyone on the team can move a story, ideally with a comment about why they made the move. We don't bother with hard WIP (work in progress) limits, because we socialize that you can truly only work on one thing at a time, and it's OK to have two stories sitting in the Development column if you are waiting for answers on one of them and it may take a few days to get a response.

When testers review developers' work and find issues, they write up enough details for the developer to reproduce the issue, ideally with screenshots or a walkthrough, and the story is moved back to the Development column for fixing. That now becomes the developer's top priority, and the fix is usually not time consuming. The story then moves back to Testing with comments from the developer about the fix.

The same process happens with user acceptance testing. If the product owner or business finds a bug or thinks of a change they want made, the story gets updated and moved back to the Development column. Sometimes the change requested is big enough to warrant a new story, which also needs to be prioritized.

Reporting Status

There is no need for daily standups or status reports. We have an online Kanban board that shows all stories in progress, their status, and who is working on them. The delivery team and the business have access to this task board. Everything the delivery team is working on is transparent.

Communication and Meetings

If there are no daily standups or status reports, how do we communicate? Since we work remotely, we do it all online. If you've got an internet connection, you're all set. We mostly use instant messaging tools and escalate to phone or video call or screen sharing as needed. Email and phone calls work, too, but those are secondary and usually used when someone we need to talk with doesn't use the same IM tool as the delivery team.

Stock images make meetings look fun, but synchronous meetings (e.g., 5 of us need to be on a call at 10am today) are expensive and their purpose is often better accomplished in other ways. Instant messaging allows us to have asynchronous meetings, so you can keep working and hop into the discussion when you have time. This avoids context switching and prevents developers and testers from looking at the clock, seeing they have a meeting in 15 minutes, and deciding not to start something new. Software design, development, and testing require uninterrupted thinking for big chunks of time, and async meetings are critical to not disturbing someone's flow.

Blocks and Team Dynamics

If people on the delivery team are blocked (e.g., "I can't access the database.", "How does the business want these buttons to look?", "I'm stuck on some SignalR syntax.", etc.), it's up to those team members to report that right away. Because there is no daily standup, there is no waiting until the next day to mention it. Anyone who can help the blocked team member does so, or connects them with someone who can.

A key assumption here is that the blocked team member will speak up and not quietly flounder for hours or days. We make sure asking for help doesn't come at the price of being teased or humiliated for not knowing something.

Everyone on the team must help other team members. In fact, how this is approached is crucial. If you ask a developer if they need help with something, 9 times out of 10 they will reflexively say no. They want to figure it out on their own, they think they've almost got it, etc. If you instead ask, "How can I help?" or "Why don't we look at this together - it's tricky." or "Can you show me how you did that? I want to learn it too." you'll have more success.

Be humble. Be considerate. Be sincere. Be helpful. Be cheerful.

Development Practices

Testing

We write LOTS of client and server-side unit tests. These tests help ensure the happy path test cases are covered and that the corner cases have at least been thought through. They also drive a decoupled design where we can mock things as needed that are not directly under test, like database queries that need to return specific data to hit a code path.
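As a rough sketch of the mocking idea above: the post doesn't name a test framework, so this assumes Python's standard `unittest` and `unittest.mock`. The function `loyalty_discount`, the repository method `orders_for`, and the five-order rule are all made up for illustration. The point is that the database query is replaced by a mock that returns exactly the data needed to hit each code path.

```python
import unittest
from unittest.mock import Mock


def loyalty_discount(customer_id, order_repo):
    """Hypothetical business rule: 10% off once a customer has 5+ orders."""
    orders = order_repo.orders_for(customer_id)  # would be a database query in real code
    return 0.10 if len(orders) >= 5 else 0.0


class LoyaltyDiscountTests(unittest.TestCase):
    def test_repeat_customer_gets_discount(self):
        # The mock stands in for the database and returns five fake orders.
        repo = Mock()
        repo.orders_for.return_value = ["o1", "o2", "o3", "o4", "o5"]

        self.assertEqual(loyalty_discount(42, repo), 0.10)
        repo.orders_for.assert_called_once_with(42)

    def test_new_customer_gets_no_discount(self):
        # Corner case: a customer with no orders at all.
        repo = Mock()
        repo.orders_for.return_value = []

        self.assertEqual(loyalty_discount(42, repo), 0.0)
```

Because the repository is passed in rather than created inside the function, the test never needs a real database. That is the decoupling the tests tend to push the design toward.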

These tests become more valuable over time. You can change something in one part of the code base and feel confident you didn't break something you wrote 6 months ago after seeing all the tests pass.

Running the unit tests to check the software is not enough to know we're done, so we also use a combination of integration, end-to-end, and manual testing.

We write some integration and end-to-end tests, though not as many, where we're testing the database, API calls, or the entire front and back end. These tests are slower to run and harder to maintain with all the setup needed, so we use these kinds of tests only in the most crucial pieces of the app (e.g., the shopping cart better work).

Developers are responsible for manually testing their own code. They wrote it, so they should know where the pitfalls could be. They are also responsible for writing detailed test scripts for team members who will be testing after them. These are usually added to the story. A developer can only move a story once they have done their own manual testing and written detailed acceptance criteria.

We also have a tester who does manual testing to double-check that the developer didn't forget anything. This manual testing is helpful for limit testing (e.g., try values like -1, 0, or 1), validation testing (e.g., if the field says it's required, it really is), cross-browser testing (e.g., if the developers are using Chrome to code/test, does it also work with Safari on iPads?), and logic testing.
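To make the limit-testing idea concrete, here is a small sketch of how a tester or developer might list the values worth trying around a field's limits. The helper `boundary_values` and the quantity rule (0 to 99) are hypothetical, not something from our projects.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Return values just below, at, and just above each limit."""
    candidates = [
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    ]
    # Drop duplicates (possible when the range is very narrow) but keep the order.
    return list(dict.fromkeys(candidates))


def is_valid_quantity(value: int, minimum: int = 0, maximum: int = 99) -> bool:
    # Hypothetical validation rule for a quantity field.
    return minimum <= value <= maximum


# Map each boundary value to whether the field should accept it.
results = {value: is_valid_quantity(value) for value in boundary_values(0, 99)}
```

The values on either side of each limit are where off-by-one mistakes tend to show up, which is why they make a good checklist for manual testing too.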

We don't measure test coverage. We have in the past, but we have never known what to do with the data. 100% test coverage isn't a goal. 50% or 75% coverage doesn't tell me we need more tests, or we have enough. It's trivia and adds overhead, so we don't bother any more.

Code Patterns and Refactoring

We develop code patterns for the project by asking all developers for input. Over time, we change our mind about some of those patterns or make updates to tools. If we can, we pull the pattern out as a shared piece of code so it can be changed in one place. If that's not practical, we bring code forward to the new patterns as we work on it.

We refactor code as needed, usually following our own version of the Boy Scout rule (always leave the campground cleaner than you found it). If you open a piece of code to make a small change, leave the rest of the code alone. If you need to make major changes, bring that code up to the latest coding patterns and tools the team is using today.

Because we have extensive test suites, major code changes are not a scary thing for the team.

Pair Programming and Code Reviews

Pair programming is used, but typically on new or complex code or with new team members. Most developers prefer to work through the problem alone, but sometimes that's a little overwhelming and pairing for an hour or so is hugely helpful.

We do code reviews of each other's work, but not for every commit. If a developer is feeling a little unsure about their work or coding patterns, or is working on something that will be shared by the whole team, a code review makes sense.

Pair programming and code reviews are fantastic ways to socialize coding patterns and check in with developers to make sure they understand and are using the team's agreed-upon development patterns.

Just-in-time Architecture and UI Design

We don't have a big architecture plan before we start the project. We have a rough idea of how it will go, but we try to code in such a way that we have options and can change the architecture just-in-time as needed during development.

For some stories, the UI design is a key to solving the problem. In those cases, we use mockup tools to quickly draft a UI and get feedback from the business and product owner. We cycle through that feedback until we're happy with the design, and then the mockups become attachments in the story so the developer has a template of what the finished product should look like.

UI design mockups can be very powerful when done live with the customer, and the tools are simple enough that ideas can become concrete designs very quickly.

Branching

We don't usually create branches for features or bug fixes. Everyone works on master. We have branches for releases, like a user-acceptance testing branch and a production branch, so we can hop onto a branch and make a fix and merge it back to the lower branches as needed. We create labels/tags for each release candidate.

Continuous Deployment and Feedback

Every code commit pushes a new release to a development web server. This tests our code integrations and builds, but more importantly, it gives the team's tester, product owner, and any other stakeholders a live look at the latest working code.

The delivery team is constantly asking for feedback from the product owner and the business about how the software is working or not working so we can adjust. With custom software, the key to getting what you want is giving the delivery team lots of feedback on the in-progress software. The continuously deployed development server is the place to take the latest code for a test drive.

Release Management

Time-boxed vs. Feature-driven

Releases can be either time-boxed, where we release everything that's done every 2 weeks, or they can be feature-driven, where we release when a feature is complete. We sometimes compromise and release every 2 weeks unless there is something important that is almost done, and then we'll wait another couple of days before releasing with that feature. We don't do this every release, and the product owner, business, and delivery team should reach consensus on whether to hold up a release.

Time-boxed releases are nice and steady and give the business an expectation of when they can have working code in production. We use feature toggles to turn off parts of the app that are not ready for production so we can still deploy code with partial features.
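A feature toggle can be as simple as a lookup that defaults to "off". The sketch below is only an illustration: the `FeatureToggles` class, the `gift_cards` flag, and the `checkout_options` function are invented names, and a real project might read the flags from configuration or a database instead.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureToggles:
    """Hypothetical in-memory toggle store."""
    enabled: dict[str, bool] = field(default_factory=dict)

    def is_on(self, name: str) -> bool:
        # Unknown features default to off, so unfinished code stays hidden.
        return self.enabled.get(name, False)


def checkout_options(toggles: FeatureToggles) -> list[str]:
    options = ["credit_card"]
    # The partially built gift card feature ships in the release but stays dark
    # until someone turns the toggle on.
    if toggles.is_on("gift_cards"):
        options.append("gift_card")
    return options


production = FeatureToggles()                        # nothing switched on yet
development = FeatureToggles({"gift_cards": True})   # testers can see the new feature
```

With this shape, `checkout_options(production)` offers only the finished payment option, while `checkout_options(development)` also includes the in-progress one, so the same code can be deployed to both environments.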

We don't build up sprints with points for time-boxed releases because I have seen negative side effects of that approach. Team members finish early and don't want to start a new story because it's for next week's sprint, or team members are working like crazy to finish before the sprint cutoff so their feature makes it into this release. Both go against the idea of having a steady, sustainable pace of work. If we're doing a release and code isn't ready and won't be ready tomorrow, that's OK.

Definition of Done

We agree on a story being done when the acceptance criteria have been met, the code is checked in, there are passing automated tests for the code, detailed steps for testing the story have been written, the manual testing found no remaining issues, and the business accepted the story as done. At any point, a story moved to the right that doesn't meet one of these can be sent back to the left for rework.
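The six conditions above amount to a checklist. We track this on the Kanban board rather than in code, so the sketch below is purely illustrative, and the `DoneChecklist` class and its field names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class DoneChecklist:
    """One flag per condition in the definition of done."""
    acceptance_criteria_met: bool = False
    code_checked_in: bool = False
    automated_tests_passing: bool = False
    test_steps_written: bool = False
    manual_testing_clean: bool = False
    business_accepted: bool = False

    def missing(self) -> list[str]:
        # Names of the conditions that have not been satisfied yet.
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_done(self) -> bool:
        return not self.missing()


story = DoneChecklist(
    acceptance_criteria_met=True,
    code_checked_in=True,
    automated_tests_passing=True,
    test_steps_written=True,
    manual_testing_clean=True,
)
```

Here `story.missing()` reports that only business acceptance is outstanding. Anything in that list is a reason the story can be sent back to the left for rework.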

Acceptance Testing the Release Candidate and Deploying to Production

We set up a development integration environment, a staging or user-acceptance testing environment, and production. Once a release candidate has been deployed, the product owner and business test those features. Ideally, this is not the first time they have seen these features or bug fixes, and they are confirming they still work as expected in this release candidate and environment.

After the business and product owner give the go-ahead that the release candidate looks OK, it's deployed to production.

Process Review and Improvement

Retrospectives

We don't have sprint retrospectives. Any time is a good time for improvement. We have a culture where suggestions are always carefully considered and incorporated where possible, even if they are a little experimental.

Calculations

After a release, we can recalculate our cycle time (how long it takes a story to go from work starting to being installed in production). This, combined with counting the number of stories per release, can give the business a simple tool for predicting when a story they have their eye on will land in production.

For example, if we complete an average of 10 stories per release, and the story in question is number 3 in the backlog and there are 6 stories on the board in progress right now, there is a small chance that story will make it into the current release (it would be the 9th story, and we average 10), but there is a very good chance that story will be in the next release after the current one.
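The arithmetic in that example can be sketched in a few lines. The function names here are made up, and the calculation is deliberately naive: it assumes stories ship in board-then-backlog order and that every release delivers exactly the average.

```python
import math
from datetime import date
from statistics import mean


def average_cycle_time_days(stories: list[tuple[date, date]]) -> float:
    """Average days from work starting to installed in production."""
    return mean((shipped - started).days for started, shipped in stories)


def queue_position(backlog_rank: int, in_progress: int) -> int:
    # Stories already on the board go first, then the backlog in priority order.
    return in_progress + backlog_rank


def releases_until_shipped(backlog_rank: int, in_progress: int, avg_per_release: float) -> int:
    """1 means the current release, 2 the one after that, and so on."""
    if avg_per_release <= 0:
        raise ValueError("avg_per_release must be positive")
    return math.ceil(queue_position(backlog_rank, in_progress) / avg_per_release)


position = queue_position(backlog_rank=3, in_progress=6)
release = releases_until_shipped(backlog_rank=3, in_progress=6, avg_per_release=10)
```

For the example above, `position` is 9 and `release` is 1. The story just squeaks into the current release on paper, but it sits so close to the average of 10 that the next release is the safer promise to make to the business.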